What would a behind-the-scenes look at a video generated by an artificial intelligence model be like? You might think the process is similar to stop-motion animation, where many images are created and stitched together, but that’s not quite the case for “diffusion models” like OpenAI’s SORA and Google’s VEO 2.

Instead of producing a video frame-by-frame (or “autoregressively”), these systems process the entire sequence at once. The resulting clip is often photorealistic, but the process is slow and doesn’t allow for on-the-fly changes.

Scientists from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and Adobe Research have now developed a hybrid approach, called “CausVid,” to create videos in seconds. Much like a quick-witted student learning from a well-versed teacher, a full-sequence diffusion model trains an autoregressive system to swiftly predict the next frame while ensuring high quality and consistency. CausVid’s student model can then generate clips from a simple text prompt, turn a photo into a moving scene, extend a video, or alter its creations with new inputs mid-generation.
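As a rough illustration of the teacher-student idea, the toy sketch below pairs a “teacher” that produces a whole sequence in one call with a “student” that learns to predict each next frame from the previous one. Everything in it is hypothetical: frames are single numbers, the names `teacher_full_sequence`, `fit_student`, and `student_rollout` are made up for this example, and the least-squares fit merely stands in for whatever training procedure the CausVid paper actually uses.

```python
def teacher_full_sequence(first_frame, num_frames, decay=0.9):
    """Toy 'teacher': returns every frame of a clip in one call.

    Each frame is a single float; the closed-form formula stands in for
    a slow full-sequence model (an assumption for illustration only).
    """
    return [first_frame * decay ** t for t in range(num_frames)]


def fit_student(sequences):
    """Fit a one-parameter 'student': next_frame = w * prev_frame.

    Uses closed-form least squares over all (prev, next) pairs taken
    from the teacher's sequences.
    """
    num = 0.0
    den = 0.0
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            num += prev * nxt
            den += prev * prev
    return num / den


def student_rollout(w, first_frame, num_frames):
    """Generate frames one at a time, each from the previous frame."""
    frames = [first_frame]
    for _ in range(num_frames - 1):
        frames.append(w * frames[-1])
    return frames


# The teacher supplies training clips; the student learns from them.
teacher_clips = [teacher_full_sequence(x0, 8) for x0 in (1.0, 2.0, 5.0)]
w = fit_student(teacher_clips)

# The student can now produce a new clip frame by frame.
student_clip = student_rollout(w, 3.0, 8)
```

In this toy setting the student recovers the teacher’s behavior almost exactly, and it can emit its first frame without computing the rest of the clip, which is the property that makes frame-by-frame generation interactive.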

This dynamic tool enables fast, interactive content creation, cutting a 50-step process into just a few actions. It can craft many imaginative and artistic scenes, such as a paper airplane morphing into a swan, woolly mammoths venturing through snow, or a child jumping in a puddle. Users can also make an initial prompt, like “generate a man crossing the street,” and then make follow-up inputs to add new elements to the scene, like “he writes in his notebook when he gets to the opposite sidewalk.”

The CSAIL researchers say that the model could be used for different video editing tasks, like helping viewers understand a livestream in a different language by generating a video that syncs with an audio translation. It could also help render new content in a video game or quickly produce training simulations to teach robots new tasks.

Tianwei Yin SM ’25, PhD ’25, a recently graduated student in electrical engineering and computer science and CSAIL affiliate, attributes the model’s strength to its mixed approach.

“CausVid combines a pre-trained diffusion-based model with autoregressive architecture that’s typically found in text generation models,” says Yin, co-lead author of a new paper about the tool. “This AI-powered teacher model can envision future steps to train a frame-by-frame system to avoid making rendering errors.”

Yin’s co-lead author, Qiang Zhang, is a research scientist at xAI and a former CSAIL visiting researcher. They worked on the project with Adobe Research scientists Richard Zhang, Eli Shechtman, and Xun Huang, and two CSAIL principal investigators: MIT professors Bill Freeman and Frédo Durand.

Caus(Vid) and effect

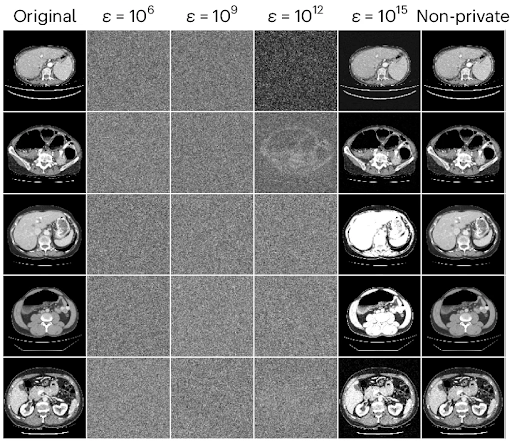

Many autoregressive models can create a video that’s initially smooth, but the quality tends to drop off later in the sequence. A clip of a person running might seem lifelike at first, but their legs begin to flail in unnatural directions, indicating frame-to-frame inconsistencies (also called “error accumulation”).
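Error accumulation can be sketched with a toy rollout in which each “frame” is a single number and the predictor is slightly wrong on every step. The functions `true_step` and `learned_step`, and the bias value of 0.05, are assumptions chosen for illustration and are not drawn from any real video model.

```python
def rollout(step, first_frame, num_frames):
    """Autoregressive rollout: each frame is computed from the previous output."""
    frames = [first_frame]
    for _ in range(num_frames - 1):
        frames.append(step(frames[-1]))
    return frames


def true_step(x):
    """Hypothetical ground-truth motion: advance by one unit per frame."""
    return x + 1.0


def learned_step(x, bias=0.05):
    """Hypothetical imperfect predictor: slightly off on every step."""
    return x + 1.0 + bias


truth = rollout(true_step, 0.0, 21)
predicted = rollout(learned_step, 0.0, 21)

# Per-frame gap between the imperfect rollout and the true motion.
errors = [abs(p - t) for p, t in zip(predicted, truth)]

for frame in (1, 10, 20):
    print(f"frame {frame:2d}: error = {errors[frame]:.2f}")
```

Because every prediction is fed back in as the next input, the small per-step mistake never gets corrected: in this sketch the gap grows in proportion to the frame index, so late frames are far worse than early ones.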

Error-prone video generation was common in prior causal approaches, which learned to predict frames one by one on their own. CausVid instead uses a high-powered diffusion model to teach a simpler system its general video expertise, enabling it to create smooth visuals, but much faster.

CausVid displayed its video-making aptitude when researchers tested its ability to make high-resolution, 10-second-long videos. It outperformed baselines like “OpenSORA” and “MovieGen,” working up to 100 times faster than its competition while producing the most stable, high-quality clips.

Then, Yin and his colleagues tested CausVid’s ability to put out stable 30-second videos, where it also topped comparable models on quality and consistency. These results indicate that CausVid may eventually produce stable, hours-long videos, or even videos of indefinite duration.

A subsequent study revealed that users preferred the videos generated by CausVid’s student model over those produced by its diffusion-based teacher.

“The speed of the autoregressive model really makes a difference,” says Yin. “Its videos look just as good as the teacher’s ones, but with less time to produce, the trade-off is that its visuals are less diverse.”

CausVid also excelled when tested on over 900 prompts using a text-to-video dataset, receiving the top overall score of 84.27. It boasted the best metrics in categories like imaging quality and realistic human actions, eclipsing state-of-the-art video generation models like “Vchitect” and “Gen-3.”

CausVid is already an efficient step forward in AI video generation, and it may soon be able to design visuals even faster, perhaps instantly, with a smaller causal architecture. Yin says that if the model is trained on domain-specific datasets, it will likely create higher-quality clips for robotics and gaming.

Experts say that this hybrid system is a promising upgrade from diffusion models, which are currently bogged down by processing speeds. “[Diffusion models] are way slower than LLMs [large language models] or generative image models,” says Carnegie Mellon University Assistant Professor Jun-Yan Zhu, who was not involved in the paper. “This new work changes that, making video generation much more efficient. That means better streaming speed, more interactive applications, and lower carbon footprints.”

The team’s work was supported, in part, by the Amazon Science Hub, the Gwangju Institute of Science and Technology, Adobe, Google, the U.S. Air Force Research Laboratory, and the U.S. Air Force Artificial Intelligence Accelerator. CausVid will be presented at the Conference on Computer Vision and Pattern Recognition in June.