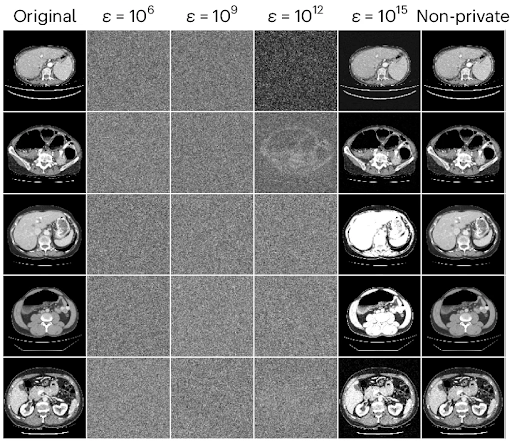

AI systems in healthcare process large volumes of sensitive information, including electronic health records (EHRs), medical images, patient details, and other Protected Health Information (PHI). Unlike older healthcare systems, AI often relies on cloud computing, data sharing, and automated data processing. These methods can increase the chance of patient data being exposed.

Privacy problems can happen in several ways:

- Unauthorized Access: Hackers or bad insiders might get into systems that hold PHI and steal information.

- Data Misuse: AI systems sometimes use data in ways that were not planned or disclosed to patients, which raises ethical concerns.

- Cloud Security: Many AI tools rely on cloud services, which adds security risks such as data leaks and exploitable vulnerabilities.

- Third-Party Risks: Companies that provide AI tools often have access to PHI, making it harder to protect data across many organizations.

Because of these risks, healthcare groups must use multiple defenses and follow rules carefully.

HIPAA Compliance and Its Role in Safeguarding AI-Driven Systems

In the U.S., the Health Insurance Portability and Accountability Act (HIPAA) is the main set of rules protecting patient information. Following HIPAA means complying with its four main parts:

- Privacy Rule: Decides who can see or share PHI.

- Security Rule: Requires steps like encrypting data, controlling access, and doing regular audits.

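- Breach Notification Rule: Requires notifying affected patients and regulators after a breach of unsecured PHI. Patients must be told without unreasonable delay and no later than 60 days after the breach is discovered.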

- Enforcement Rule: Explains punishments and investigations if the rules are broken.

Medical leaders and IT teams should know that HIPAA violations can lead to fines of up to $1.5 million per year for each type of violation, and criminal charges are also possible. Beyond financial penalties, data leaks can damage an organization’s reputation and erode patient trust.

To follow HIPAA in AI settings, healthcare providers must always check risks, update security, and build a workplace where privacy is important to everyone. When everyone shares the duty to keep data safe, security becomes part of the culture, not just a rule.

Implementing Technical Safeguards for AI Privacy Protection

Technology helps a lot in keeping PHI safe in AI systems. Some key steps are:

- Data Encryption: Encrypting data at rest and in transit keeps it unreadable if it is stolen.

- Access Controls: Multi-factor authentication, role-based access, and regular permission reviews limit who can see sensitive information.

- Continuous Monitoring: Watching user actions in real time helps catch unusual access early.

- Secure Data Storage: AI tools using cloud services should pick providers with strong security standards like HITRUST certification.

- Incident Response Planning: Being ready to quickly investigate and report security problems follows HIPAA rules and keeps patients informed.
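The access-control and monitoring items above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the role names, the permission strings, the `User` class, and the in-memory `audit_log` are all hypothetical, and a real system would delegate authentication to its identity provider and write audit entries to tamper-resistant storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical role-to-permission map; a real deployment would load this
# from its identity provider or EHR configuration.
ROLE_PERMISSIONS = {
    "physician": {"read_phi", "write_phi"},
    "billing": {"read_billing"},
    "front_desk": {"read_schedule"},
}


@dataclass
class User:
    username: str
    role: str
    mfa_verified: bool  # assumed to be set by the login flow after a second factor


# In-memory stand-in for an append-only audit store (assumption for this sketch).
audit_log = []


def can_access(user: User, permission: str) -> bool:
    """Allow only MFA-verified users whose role grants the permission."""
    granted = ROLE_PERMISSIONS.get(user.role, set())
    allowed = user.mfa_verified and permission in granted
    # Record every decision, including denials, so later audits can review them.
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user.username,
        "permission": permission,
        "allowed": allowed,
    })
    return allowed
```

Logging denials as well as approvals is a deliberate choice here: repeated denied requests are often the earliest sign that an account is being misused.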

AI-based cybersecurity tools can support these efforts by checking risks automatically, spotting threats early, and showing system weaknesses. For example, AI risk platforms watch data all the time, flag suspicious actions, and help prevent big breaches.
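As a rough illustration of what "flagging suspicious actions" can mean in practice, the sketch below compares each user's PHI access count for the current day against that user's own past daily counts. The function name, the data layout, and the default threshold of three standard deviations are assumptions made for this example. Commercial risk platforms use far richer signals.

```python
from statistics import mean, pstdev


def flag_unusual_access(history, today, threshold=3.0):
    """Return users whose access count today is far above their own baseline.

    history: {user: [past daily access counts]}
    today:   {user: access count so far today}
    A user is flagged when today's count exceeds
    mean(past) + threshold * stdev(past).
    """
    flagged = []
    for user, count in today.items():
        past = history.get(user, [])
        if len(past) < 2:
            continue  # not enough baseline data to judge this user
        baseline = mean(past)
        # Fall back to 1.0 so perfectly steady users do not get a zero spread.
        spread = pstdev(past) or 1.0
        if count > baseline + threshold * spread:
            flagged.append(user)
    return sorted(flagged)
```

A per-user baseline is used rather than one global limit because normal volume differs widely by role: a count that is routine for a nurse may be alarming for a billing clerk.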

Addressing Algorithmic Bias and Ethical Governance in AI

Privacy protection alone is not enough to build trust in AI healthcare. Algorithmic bias and ethics matter as well. Bias happens when the data an AI system learns from does not represent all groups fairly or reflects past inequalities. This can cause unfair treatment, especially for certain patient groups.

To fix bias and keep ethics, healthcare groups should:

- Use training data that covers diverse populations.

- Have teams with clinical and ethical experts regularly check AI results.

- Use Explainable AI (XAI) so doctors know how AI makes decisions.

- Talk openly with patients about how AI helps and protects their data.
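One simple way a review team might check AI results for bias is to compare a performance metric across patient groups. The sketch below computes sensitivity (the share of true cases the model catches) per group and reports the largest gap. The group labels, the record layout, and the choice of sensitivity as the metric are assumptions for illustration; a real audit would look at several metrics and at sample sizes.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute the true positive rate for each patient group.

    records: iterable of (group, actual, predicted) tuples with boolean labels.
    Groups with no actual positive cases are left out of the result.
    """
    positives = defaultdict(int)
    true_positives = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            positives[group] += 1
            if predicted:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def largest_gap(rates):
    """Difference between the best and worst group rates (0.0 if empty)."""
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())
```

A large gap does not prove the model is unfair on its own, but it tells clinical and ethics reviewers where to look first.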

If AI is not transparent, people may not trust it or want to use it. A 2025 survey showed that over 60% of healthcare workers are hesitant to use AI because of data security concerns and a lack of clear information.

Regulatory Challenges and Industry Standards in AI Healthcare Compliance

One challenge is that AI technology changes faster than regulations can keep up. Bodies such as the FDA and the European Commission are developing new guidelines, but current rules focus mostly on whether systems are accurate and less on patient outcomes or strong data protection.

Jeremy Kahn from Fortune says many AI systems get approved based on past data without proof they help patients in real life. This gap means providers need to be extra careful and use strong privacy and ethical rules beyond what the law requires.

Programs like HITRUST’s AI Assurance offer a way to manage AI security risks transparently, in collaboration with cloud providers such as AWS, Microsoft, and Google.

AI-Driven Automation and Workflow Optimization for Data Security

AI not only helps clinical care but also automates office tasks. Robotic Process Automation (RPA) is used for billing, appointments, claims, and answering patient questions.

With AI automation, medical offices can:

- Cut down on manual data work, reducing human mistakes that could break privacy rules.

- Make access controls stronger by watching user behavior and flagging risky actions.

- Automate audits and compliance reports to save time.

- Detect incidents earlier with continuous AI monitoring.

Integrating AI tools with Electronic Health Records (EHR) and phone services helps lower staff workload while keeping privacy controls consistent. Some companies offer AI phone automation that talks to patients while protecting their information, which can improve the patient experience as well as privacy.

Managing Third-Party and Vendor Risks in AI Healthcare Solutions

Many medical offices depend on third-party vendors for AI software, telemedicine, cloud storage, and security. Each outside group adds complexity in protecting patient data.

Healthcare leaders should:

- Run thorough risk assessments, using AI tools that speed up security questionnaires and summarize vendor compliance.

- Watch vendor performance carefully to find and fix risks caused by their subcontractors.

- Make sure vendors follow HIPAA and security rules and sign contracts, such as business associate agreements (BAAs), that cover data protection and breach reporting.

AI helps with vendor checks, but human review is still very important to keep strong oversight and protect patients.

Staff Training and Organizational Culture for Sustained Privacy Protection

Training employees is key to any privacy plan. Everyone—from front desk workers to doctors, IT staff, and managers—needs regular lessons on:

- HIPAA rules and penalties.

- How to spot phishing and other attacks on healthcare data.

- Best methods for passwords, encryption, and secure communication.

- How to report incidents quickly and clearly.

Training through webinars, workshops, and practice breach drills helps make privacy a shared goal, not just a rule to follow.

Looking Ahead: Future Directions for AI Privacy in U.S. Healthcare

Privacy risks in AI healthcare are complex and change all the time. Future steps must include:

- New technologies like Explainable AI (XAI) for better transparency.

- Stronger, clearer regulations focused on real patient results and solid data protection.

- Industry standards made by healthcare providers, developers, policymakers, and payers working together.

- Better cybersecurity programs using AI to both improve care and protect data.

By using these strategies, medical groups can keep sensitive patient data safe, follow the law, and build trust for wider use of AI healthcare tools.

Frequently Asked Questions

What are the primary privacy concerns when using AI in healthcare?

AI in healthcare relies on sensitive health data, raising privacy concerns like unauthorized access through breaches, data misuse during transfers, and risks associated with cloud storage. Safeguarding patient data is critical to prevent exposure and protect individual confidentiality.

How can healthcare organizations mitigate privacy risks related to AI?

Organizations can mitigate risks by implementing data anonymization, encrypting data at rest and in transit, conducting regular compliance audits, enforcing strict access controls, and investing in cybersecurity measures. Staff education on privacy regulations like HIPAA is also essential to maintain data security.
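As a small illustration of the first item, the sketch below replaces a patient identifier with a keyed hash and drops a few direct identifiers before a record is passed to an AI pipeline. Note the assumptions: the field names and the `DIRECT_IDENTIFIERS` set are hypothetical, and this is pseudonymization only. It does not by itself meet HIPAA's de-identification standards, which call for either removing all 18 Safe Harbor identifier types or an expert determination.

```python
import hashlib
import hmac

# Illustrative subset only; HIPAA Safe Harbor lists 18 identifier types.
DIRECT_IDENTIFIERS = {"name", "phone", "email", "address"}


def pseudonymize_id(patient_id: str, secret_key: bytes) -> str:
    """Map a patient ID to a stable token using HMAC-SHA256.

    The same ID and key always give the same token, so records can still be
    linked, but the ID cannot be recomputed without the secret key.
    """
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_record(record: dict, secret_key: bytes) -> dict:
    """Return a copy of the record without direct identifiers or the raw ID."""
    cleaned = {
        key: value
        for key, value in record.items()
        if key not in DIRECT_IDENTIFIERS and key != "patient_id"
    }
    cleaned["patient_token"] = pseudonymize_id(record["patient_id"], secret_key)
    return cleaned
```

A keyed hash is used instead of a plain hash because identifiers such as medical record numbers come from a small, guessable space. Without a secret key, an attacker could hash every possible value and reverse the mapping. The key itself needs the same protection as the PHI it shields.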

What causes algorithmic bias in AI healthcare systems?

Algorithmic bias arises primarily from non-representative training datasets that overrepresent certain populations and from historical inequities embedded in medical records. Both lead to skewed AI outputs that may perpetuate disparities and unequal treatment across different demographic groups.

What are the impacts of algorithmic bias on healthcare equity?

Bias in AI can result in misdiagnosis or underdiagnosis of marginalized populations, exacerbating health disparities. It also erodes trust in healthcare systems among affected communities, discouraging them from seeking care and deepening inequities.

What strategies help reduce bias in AI healthcare applications?

Inclusive data collection reflecting diverse demographics, continuous monitoring and auditing of AI outputs, and involving diverse stakeholders in AI development and evaluation help identify and mitigate bias, promoting fairness and equitable health outcomes.

What are major barriers to patient trust in AI healthcare technologies?

Key barriers include fears about device reliability and potential diagnostic errors, lack of transparency in AI decision-making (‘black-box’ concerns), and worries regarding unauthorized data sharing or misuse of personal health information.

How can trust in AI systems be built among patients and providers?

Trust can be built through transparent communication about AI’s role as a clinical support tool, clear explanations of data protections, regulatory safeguards ensuring accountability, and comprehensive education and training for healthcare providers to effectively integrate AI into care.

What are the challenges in regulating AI for healthcare applications?

Regulatory challenges include fragmented global laws leading to inconsistent compliance, rapid technological advances outpacing regulations, and existing approval processes focusing more on technical performance than proven clinical benefit or impact on patient outcomes.

How can regulatory frameworks better ensure the ethical use of AI in healthcare?

Frameworks can do this by setting standards that require AI systems to demonstrate real-world clinical efficacy, fostering collaboration among policymakers, healthcare professionals, and developers, and enforcing patient-centered policies with clear consent and accountability for AI-driven decisions.

What role does purpose-built AI play in ethical healthcare innovation?

Purpose-built AI systems, designed for specific clinical or operational tasks, must meet stringent ethical standards including proven patient outcome improvements. Strengthening regulations, adopting industry-led standards, and collaborative accountability among developers, providers, and payers ensure these tools serve patient interests effectively.

The post Comprehensive Strategies for Mitigating Privacy Risks in AI-Driven Healthcare Systems to Safeguard Sensitive Patient Data and Ensure Compliance first appeared on Simbo AI – Blogs.