On stage and in the hallways at our Capital+Strategy conference this week in Charlotte, AI was on everyone’s lips – but not in the ways I’ve previously heard.

AI has already moved from a theoretical future to an actionable pathway. Now conversations have also moved from fear of AI risk to acceptance of AI, and even to trust in AI as a de-risking tool. More than I’ve heard before, providers agreed that AI is more reliable than humans in many domains.

The tension came when I heard some providers highlight the moral, relational and trust concerns relating to AI. Opinions differed on whether AI should be used in more human-focused contexts and on how clinicians, caregivers, clients and patients would respond to its use. These conversations matter more than ever now that AI has been heralded as the answer to the industry’s staffing and efficiency challenges.

In this week’s exclusive, members-only HHCN+ Update, I round up my key AI-focused takeaways from Capital+Strategy and offer analysis, including:

– Why providers’ trust in AI is on the rise and what that means for operations

– The moral AI debate

– Where caregivers’ and clinicians’ interests lie

The rapid evolution

I often think about risk when talking about AI. I’ve played around with some of the common hallucination-inducing prompts for AI tools, and you can send the AI into a tailspin just by asking it to generate a seahorse emoji. When thinking about applying AI to health care, these examples move to the front of my mind. And providers are aware of the risks, with some crafting AI-specific policies to ensure compliance.

At Capital+Strategy, it was notable to me that fewer providers were focused on risk in AI discussions. Providers were instead focused on how quickly AI has become significantly more powerful, therefore offering a level of reliability that humans cannot replicate.

Wrapping your head around this rapid improvement is difficult, according to David Bell, the founder and CEO of Grandcare Health, because people often struggle to “[understand] exponential growth.” The systems available a year ago were about a tenth as good as the ones available now, he said.

“We can already use these systems to prevent the dumb, little, stupid paper cuts that make our lives miserable all day long. But every year it’s going to be capable of higher and higher level things,” Bell said on a panel.

Other leaders struck similar notes.

“We’re seeing AI able to answer incoming phone calls and represent your company for the very first time with a customer,” Jeff Salter, founder and CEO of Caring Senior Service, said. “The things we’re seeing are that they’re doing better than humans. They do a better job of understanding the needs of a client, and I think that’s something that most people in this room a year ago would have said, ‘Now, that’s never going to get replaced.’ And we’re seeing it’s doing a better job. There are just a lot of opportunities that are not just back office, but now it’s considered almost front office.”

Still, providers have to be ready for when something does go wrong, Salter said. He recommended providers engage a public relations company as soon as they begin using AI to be prepared to handle any AI missteps.

Ralph Laughton, the CEO and head of strategic partnerships at Heart, Body & Mind Home Care, said he has turned 180 degrees on AI in the last year or so, and has now embraced the technology and is “all in.” Still, he outlined one of the risks that he is concerned about.

“If we use an ambient listening device in somebody’s home overnight … then are we taking on the responsibility of making sure that if something happens overnight, we’re responsive, and if we’re not, is that a problem for us?” Laughton asked. “I would not want to take on additional risk, or I would want to do something that limits exposure to that. So maybe a waiver.”

After this event, it’s clear to me that Salter is right about providers’ changing perceptions of AI. Providers now see AI as a de-risking tool in certain scenarios, rather than a questionable leap into unknown technology.

Trusting AI

But while general enthusiasm for AI was palpable, differences of opinion still drove some debates. Providers all agreed that home-based care is a people business and that AI is an important part of protecting the sustainability of the business – but divisions emerged on how AI plays into the people element.

In my personal life, I am encountering far fewer concerns about a “2001: A Space Odyssey”-like robot takeover attempt now than I did just two or three years ago. And research shows that folks have increasingly bought into AI, even when it comes to their health.

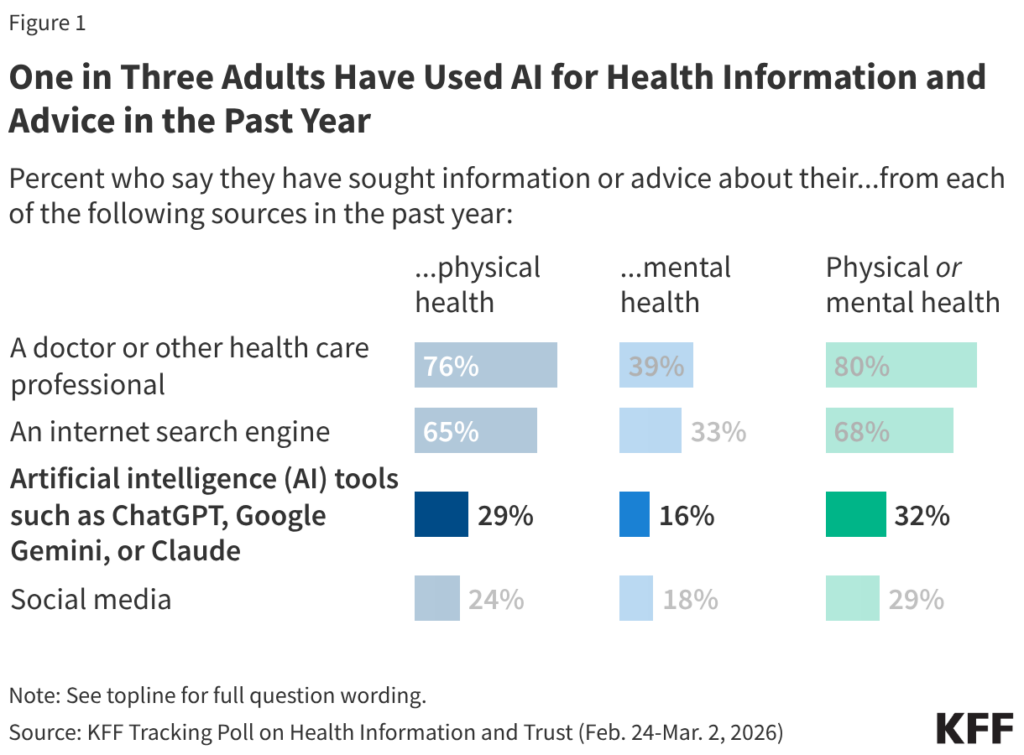

New research published on Wednesday by KFF found that about a third of adults use AI for health information and advice. This is huge – it demonstrates that the cutting-edge AI health care tools that will likely be part of the home-based care industry’s AI transformation are gaining buy-in from a large portion of the population.

Interestingly, the same survey found that 77% of the public expressed concern about data privacy when sharing personal medical information with AI tools – but that 41% of those who have used AI for physical or mental health information and advice say they’ve uploaded personal medical information into an AI tool or chatbot.

So the “Space Odyssey” concerns may not be totally vanquished, but they aren’t stopping a large swath of the population from accepting AI’s role in their health journey. The tension between increasing use of AI and ongoing data privacy concerns was also evident at Capital+Strategy, particularly in providers’ moral quandary about the extent to which they should use AI to communicate with clients or patients, or caregivers and clinicians.

“This is just a concern I have with where we’re going,” Bob Roth, managing partner of Cypress Home Care, said on a panel. “Because we’re trying to do more with less, and a lot of people are utilizing machines and AI to communicate with our caregivers, and I feel like that’s not the right path we should be taking. We should be building relationships.”

Other people I talked with pointed out that AI serves as an equalizer for the people behind home-based care. Salter said he was convinced to use caregiver-screening AI tools after hearing this story:

“A young lady received a call from an AI agent screening her for a job interview,” Salter recounted. “Her impression was that she really appreciated the AI interaction, and it was because she suffered from a lisp and in her normal interactions with humans, she was immediately discounted for a job. Highly qualified, but immediately discounted. So when AI can do things like that, take an individual and get past the bias, [that is] winning.”

Not all caregivers will be wild about AI, however, according to Megan Casey, the senior vice president of Live Well Home Care.

“Last year, if you would have asked me if technology and AI has a place in caregivers and clients, I would have said, when you can teach a computer how to have empathy, call me,” Casey said. “And then somebody called my bluff and called me back and said, ‘Well, this computer has empathy, learned empathy.’ Caregivers don’t want learned empathy. They don’t want to talk to a computer. They want to talk to a real person who understands them, that remembers that it’s their birthday, or their child’s getting ready to go back to school, or they’re afraid of large dogs, or they hate smokers.”

I haven’t found specific data about how caregivers and clinicians feel about AI implementation, but no doubt such information will be forthcoming. I’m interested in hearing from any providers that have surveyed their own workforce about AI being used in caregiver support. My takeaway from Capital+Strategy is that, at a minimum, providers should over-communicate about the implementation of internal AI tools and be ready to field concerns from their workers.

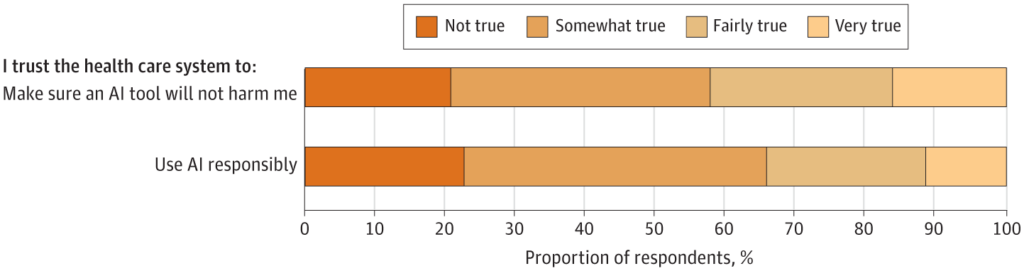

When it comes to whether a patient will trust the AI agent or their home-based care provider, the story is a bit murky. A study published in JAMA (in February 2025, so take it with a grain of salt, since folks’ impressions may have changed) found that most of its respondents – 65.8% – reported low trust in their health care system to use AI responsibly and most – 57.7% – also reported low trust that their health care system would make sure an AI tool would not harm them.

So, even as the providers at Capital+Strategy expressed increasing trust in AI, the findings suggest that they still should exercise caution in launching patient-facing AI tools, and should take pains to explain why AI tools that are in use can be trusted to safeguard sensitive patient data.

My last notable AI takeaway from Capital+Strategy is no surprise to providers: the sea of AI tools is deep and crowded. As operators grapple with how to engage with AI, they must ensure that the tools they use solve a problem they actually have, to avoid wasted investment and the potential to erode caregiver or client trust.

“You have to define the problem before you find the solution,” Sherry Kesler, the vice president of post-acute care services at West Virginia University Health, said. “A lot of people want to go for the AI solution before they even know what their problem is, and then they’re picking the wrong solution, and then they don’t integrate it into their current workflows.”

The post ‘Doing Better Than Humans’: At-Home Care Providers Reframe AI Risk, Trust, Reliability appeared first on Home Health Care News.